Measuring Process Adherence: The Small Sample Insight

My IHI colleague Dr Don Goldmann recently described his practice as an experienced epidemiologist and improvement scientist: if a health care team claims to ‘always’ include specific documentation for a set of patients, go grab ten charts and find out. If you see even one chart without the expected documentation, claim refuted! Next step: help the team generate ideas to improve performance and get them to test those ideas to assess impact.

Don summarized his practical method in an email discussion of a recent paper by Etchells and Woodcock, “Value of small sample sizes in rapid-cycle quality improvement projects 2: assessing fidelity of implementation for improvement interventions”, BMJ Qual Saf 2018; 27:61–65.

The authors remind readers that if you aim to have reliable adherence to a protocol—they give an example of 80% compliance—“…small samples of 5-10 patients may be large enough to demonstrate a gap in care.” See the technical note below for basic probability arithmetic that supports this statement.

Application to the What Matters Project

I discussed the ‘What Matters’ project in my last post.

There are many compelling reasons to focus on ‘What Matters’. If the aim is to have care teams learn about ‘What Matters’ to their patients, question two of the Model for Improvement then asks how the teams will know if changes are leading to greater understanding and use of ‘What Matters’ in care.

Question two is the measurement question.

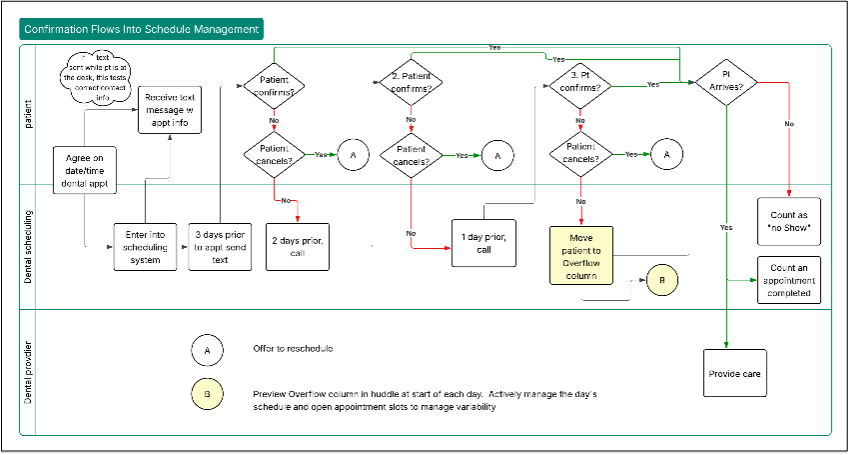

The workflow to document What Matters is under the control of the hospital unit, primary care clinic or ED in our project. Our proposed process measure, “Documentation of What Matters”, tracks adherence to this agreed-upon workflow. To make this measure practical, it’s crucial to minimize measurement burden for hospital units, clinics and EDs that are learning how to focus on What Matters.

When the hospital unit, clinic or ED has defined a workflow to engage reliably with patients about What Matters and document the information, we would expect high performance on the process measure, at 90% or above. (I argue here that process measures of adherence could have a very high goal of 100%.)

Let’s apply the small sample insight.

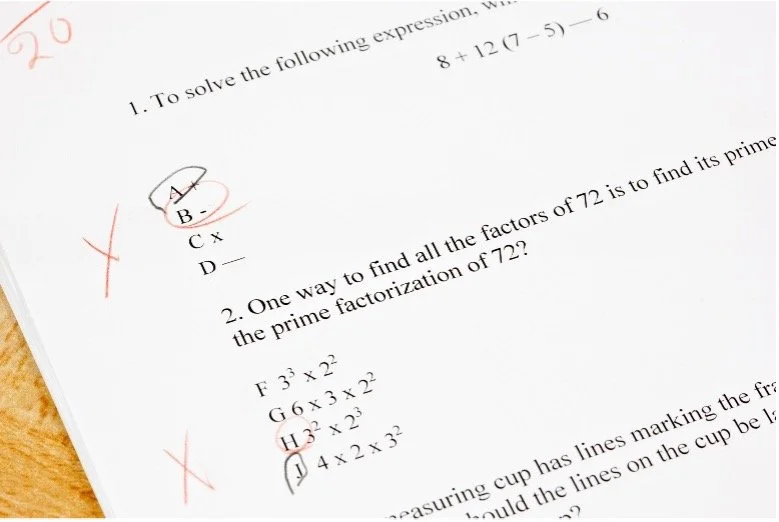

With a target documentation reliability at 95%, you don’t have to examine many records to tell if you’re missing the target. If you have a random sample of ten charts, as soon as you see three failures, you can conclude that the process is below target.

It’s a better use of your time and limited resources to observe the What Matters engagement and documentation and test changes, measuring the documentation as part of the test, than to seek a precise estimate of process performance based on a large number of records.

The Tally Option

Rather than batching the calculation of a measure like ‘Documentation of What Matters’ in a weekly sampling of charts, it’s even better to track performance in real time, discussed here. Real-time tracking then aligns naturally with observing performance in specific tests of change.

Probability Arithmetic Technical Note

In a batch of 10 records randomly selected from a big batch (100 or more), if you see three records that fail to show correct documentation and you have a target reliability of 95%, you can conclude you need to improve your work processes.

Examining the records sequentially, you can stop auditing as soon as you hit three failures. With three failures, you’ve got pretty strong evidence that the system is not working to target: under the binomial model, three or more failures out of 10 has a probability of only about 1% in a system that is 95% reliable. Five or more failures out of 10 has a probability of less than 1 in 10,000.
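The arithmetic behind these statements can be sketched in a few lines of Python. This is a minimal illustration under the binomial model described in this note, not the code behind the chart; the function name `prob_at_least` and its parameters are hypothetical.

```python
from math import comb

def prob_at_least(failures: int, n: int, reliability: float) -> float:
    """Probability of seeing `failures` or more failing records in a sample
    of n, assuming each record independently passes with probability
    `reliability` (the binomial model)."""
    q = 1.0 - reliability  # chance that any single record fails
    return sum(
        comb(n, k) * q**k * reliability ** (n - k)
        for k in range(failures, n + 1)
    )

# Sample of 10 charts, target reliability 95%
for k in range(1, 6):
    print(f"P({k} or more failures) = {prob_at_least(k, 10, 0.95):.5f}")
```

With these inputs, the probability of three or more failures comes out at roughly 0.0115. The same function can be tried with other sample sizes and targets, for example `prob_at_least(2, 5, 0.8)`.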

Here’s the picture again:

The tiny type in the subtitle refers to the binomial model. This model requires an ‘independence’ assumption: the quality of the documentation of one chart is not affected by the quality of another chart. In other words, we expect no unusual clumps of failures. If you take the trouble to draw a random sample of records, the physical act of randomization will tend to break up any clumps.

You can justify the binomial model as a useful approximation if you can randomly select the 10 charts from a large list of charts (100 or more). For example, from a list of 200 charts, pick a random number between 1 and 200/10=20. I like to go to www.random.org to pick a random number; the site uses atmospheric noise to generate ‘true’ random numbers. I just did this and got the number 14. Now pick your ten charts from the list to form a random sample of 10: take the 14th, 34th, 54th, …, 194th.
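The selection rule in this example (a random start, then every 20th chart) can be sketched as follows. This is only an illustration: the function name `systematic_sample` is hypothetical, and Python's built-in pseudo-random generator stands in for www.random.org when no starting number is supplied.

```python
import random

def systematic_sample(list_size: int, sample_size: int, start=None):
    """Return 1-based positions of the charts to pull: a random start
    between 1 and the step, then every step-th chart after that."""
    step = list_size // sample_size  # e.g. 200 // 10 = 20
    if start is None:
        # Assumption: a pseudo-random start is acceptable here; the post
        # itself uses www.random.org to pick this number.
        start = random.randint(1, step)
    if not 1 <= start <= step:
        raise ValueError("start must be between 1 and the step size")
    return [start + i * step for i in range(sample_size)]

# Reproduce the example in the text: list of 200 charts, random start of 14
print(systematic_sample(200, 10, start=14))
# [14, 34, 54, 74, 94, 114, 134, 154, 174, 194]
```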

In some settings, a sample of 10 records will be a large fraction of weekly or monthly records. Then the appropriate random sampling model is the hypergeometric model. However, because the hypergeometric model has smaller variance than the binomial model for the same sample size and probability of success, the binomial model will provide a conservative assessment of the failure count: for the same sample size and the same reliability, the hypergeometric probability of seeing a given number of failures or more (for a count above the expected number, such as three in ten) will typically be lower than the corresponding binomial probability. For the basic formulas, see Wroughton J and Cole T, “Distinguishing Between Binomial, Hypergeometric and Negative Binomial Distributions”, Journal of Statistics Education 2013; 21(1):1–16, www.amstat.org/publications/jse/v21n1/wroughton.pdf, accessed 2 June 2018.
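To see the conservative direction concretely, the two models can be compared side by side. This is a rough sketch under stated assumptions: the population sizes (100 and 40 records) are invented for illustration, the population is assumed to contain exactly 5% failing records, and the function names are hypothetical.

```python
from math import comb

def binomial_tail(failures: int, n: int, fail_rate: float) -> float:
    """P(failures or more) in n independent draws with the given failure rate."""
    return sum(
        comb(n, k) * fail_rate**k * (1 - fail_rate) ** (n - k)
        for k in range(failures, n + 1)
    )

def hypergeometric_tail(failures: int, n: int, pop_size: int, pop_failures: int) -> float:
    """P(failures or more) when n records are drawn without replacement from
    pop_size records, of which exactly pop_failures are failing."""
    total = comb(pop_size, n)
    return sum(
        # comb returns 0 when asked for more items than are available
        comb(pop_failures, k) * comb(pop_size - pop_failures, n - k)
        for k in range(failures, n + 1)
    ) / total

# Illustrative populations: 5 failing records in 100, and 2 failing records in 40
print("binomial, 95% reliable:     ", binomial_tail(3, 10, 0.05))
print("hypergeometric, 5 of 100:   ", hypergeometric_tail(3, 10, 100, 5))
print("hypergeometric, 2 of 40:    ", hypergeometric_tail(3, 10, 40, 2))
```

In both illustrative populations the hypergeometric probability of three or more failures is smaller than the binomial value, and in the 40-record case it is exactly zero because only two failing records exist. Treating the binomial figure as the threshold therefore errs on the cautious side.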

If you want to explore a bit, I created a Shiny app, here, that calculates probabilities for record sets between 5 and 30 and reliability levels between 0.9 and 0.995.