Do clinical changes cause better outcomes for patients with diabetes?

I’m working with a clinical team that is redesigning care interactions to improve health outcomes of patients with diabetes. The primary outcome measure is hemoglobin A1c, a measure that reflects the average level of blood sugar over the past two to three months.

The level of engagement shown by each patient appears to affect the degree to which the clinic can apply its bundle of changes. The level of engagement also affects A1c, mediated through diet, exercise, and adherence to medications.

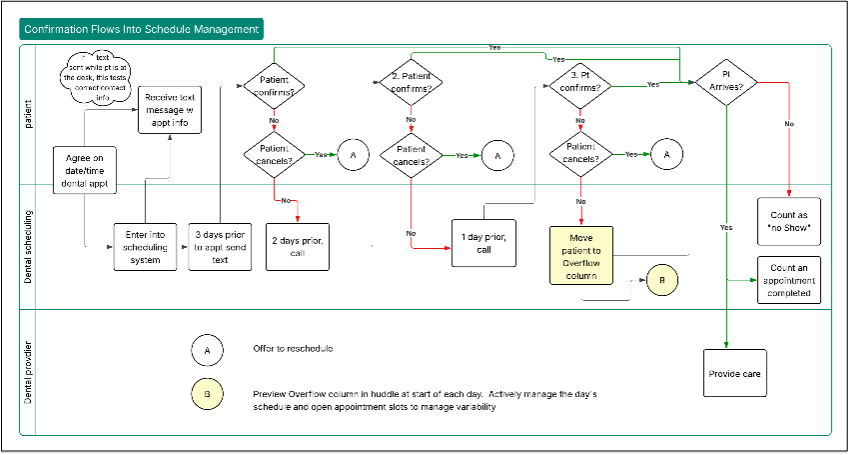

The diagram shows a simple picture, a causal diagram, that can make explicit our mental model of cause and effect.

Under what conditions can we say that the team’s changes cause improvement in A1c?

According to Judea Pearl, the standard mind-set, language and methods of statistics don’t provide a formal, structured way to answer that question. That’s the case even if a randomized experiment is designed and applied.

If we observe better outcomes, Pearl says we lack a structured causal language to inform our analysis. We get stuck at the level of association between changes and outcomes, guided by the lesson from Statistics 101 that “correlation does not mean causation.”

Research and Application of Causal Tools

Over the past 30 years, a modern research program led by Pearl has generated methods and perspectives for causal inference that have attracted the attention of applied scientists across a range of domains. With co-author Dana Mackenzie, he recently published a relatively non-technical summary of this research, The Book of Why (2018, Basic Books).

To develop a mathematical language for causality, Pearl combines traditional tools of statistics with a methodology of causal graphs like the diabetes diagram. The glue between statistics and graphs is a structured set of rules called the “do-calculus.”

Causal diagrams and formal rules that translate such diagrams into summaries of observations can help scientists, engineers, and system managers characterize knowledge and develop specific statements about causes and effects.

A couple of weeks of study have gotten me here:

- Causal diagrams look like a useful extension of my tool set. While driver diagrams and cause-effect diagrams have the same structure as Pearl’s causal graphs, Pearl’s graph theory makes confounding factors explicit and lets you describe causal models with more nuance than driver diagrams allow.

- Pearl distinguishes between observational studies and experiments, by which he means tests that use randomization to break statistical dependence between an intervention and potential confounding factors. In observational studies, causal diagrams and the do-calculus allow an analyst to determine the degree to which causal statements can be justified and estimated.

- Quality improvement always involves one or more changes to the production process or system, usually without randomization. For now, I think that typical QI interventions fall into the observational study bucket in terms of Pearl's causal tools, despite the fact that there's a conscious decision to change one or more variables to new levels.
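
The distinction between observing and intervening in the bullets above can be written compactly in Pearl's notation. The symbols here are assumptions chosen for illustration: X is the intervention, Y the outcome, and Z a set of observed confounders that satisfies Pearl's back-door criterion.

$$
P(Y \mid X = x) = \sum_z P(Y \mid X = x, Z = z)\, P(Z = z \mid X = x)
$$

$$
P(Y \mid \operatorname{do}(X = x)) = \sum_z P(Y \mid X = x, Z = z)\, P(Z = z)
$$

The two expressions differ only in the weights placed on Z. Randomization makes X independent of Z, so P(Z = z | X = x) = P(Z = z) and the two coincide; without randomization they generally do not.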

Study Sources

The Book of Why is a relatively gentle introduction to causal inference. Causal Inference in Statistics: A Primer by Pearl, Glymour and Jewell (2016, Wiley) makes more demands on the reader: you should read with paper and pencil and try the exercise problems. This book, in turn, is a simplified version of Pearl's more comprehensive Causality (2nd edition, 2009, reprinted with corrections 2013, Cambridge University Press). Pearl’s website has links to many articles, tech reports and lectures. His blog has lively discussion of a range of technical statistical and philosophical matters related to causality.

Link to Deming’s Distinction

W.E. Deming outlined two classes of problems, enumerative and analytic. His 1975 article “On Probability as a Basis for Action” (The American Statistician, Vol 29, No 4, pp. 146-152) is a good introduction.

As I noted in a 2016 post:

Enumerative studies focus on characterizing a particular situation, for example, the properties of patients seen by an organization in one month. We are concerned with counting or assessing attributes of those patients. We are not concerned with the system of causes that generated the pattern of attributes exhibited by the population.

On the other hand, analytic studies purposely focus on a system of causes; calculations of a specific instance help the analyst gain insight into that system. Typically, analytic studies inquire about the behavior of a system of causes over time. A key question in analytic studies is whether the system of causes remains essentially the same over time; if so, analysts may make a prediction about future performance, with the prediction derived from study of past performance.

Deming’s distinction, as far as I can tell, had relatively little influence on the teaching of statistics in the last half of the twentieth century, though Deming argued his case with enthusiasm and clarity.

On the other hand, the distinction shaped the understanding of my colleagues at Associates in Process Improvement (www.apiweb.org) who integrated Deming’s views into their thinking and practice; in turn, they shaped the core improvement methods applied by the Institute for Healthcare Improvement (www.ihi.org).

Deming’s warnings about the provisional nature of our knowledge in the solution of analytic problems and limitations of probability methods in prediction still demand our attention. Pearl’s willingness to use the concept of population would have triggered Deming’s criticism in some cases. Nonetheless, Pearl provides a structured theory that appears relevant to analytic studies and deserves more of my time to understand.

A Causal Answer to the Improvement Question

If the causal diagram captures the relationships among the variables, then we can estimate a causal effect so long as we have data on each patient's level of engagement, data on which patients got the bundle of interventions, and, of course, patient measurements of A1c. If we have no observations of patient engagement, we can't make a causal statement.
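
To make the role of the engagement data concrete, here is a minimal sketch in Python that adjusts for engagement by stratifying on it. Everything in it is hypothetical: the simulated patients, the effect sizes, and the function names (`simulate`, `naive_difference`, `backdoor_adjusted`) are invented for illustration and do not come from the clinic's data. It also assumes engagement is recorded as simply high or low and is the only confounder, as in the diagram.

```python
import numpy as np

# Assumed effect of the bundle on A1c, in percentage points (made up).
TRUE_EFFECT = -0.5


def simulate(n=20_000, seed=0):
    """Simulate hypothetical patients; engagement drives both bundle receipt and A1c."""
    rng = np.random.default_rng(seed)
    engaged = rng.random(n) < 0.5  # confounder: high vs. low engagement
    # Engaged patients are more likely to receive the bundle.
    bundle = rng.random(n) < np.where(engaged, 0.8, 0.3)
    # Engagement lowers A1c on its own; the bundle adds TRUE_EFFECT on top.
    a1c = 8.5 - 1.0 * engaged + TRUE_EFFECT * bundle + rng.normal(0.0, 0.8, n)
    return engaged, bundle, a1c


def naive_difference(bundle, a1c):
    """Mean A1c with the bundle minus mean A1c without it, ignoring engagement."""
    return a1c[bundle].mean() - a1c[~bundle].mean()


def backdoor_adjusted(engaged, bundle, a1c):
    """Average the within-stratum differences, weighted by each stratum's share."""
    effect = 0.0
    for level in (False, True):
        stratum = engaged == level
        diff = a1c[stratum & bundle].mean() - a1c[stratum & ~bundle].mean()
        effect += diff * stratum.mean()
    return effect


engaged, bundle, a1c = simulate()
print(f"naive difference:  {naive_difference(bundle, a1c):+.2f}")
print(f"adjusted estimate: {backdoor_adjusted(engaged, bundle, a1c):+.2f}")
print(f"simulated truth:   {TRUE_EFFECT:+.2f}")
```

In this made-up setup the naive comparison overstates the benefit, because engaged patients both receive the bundle more often and have lower A1c anyway. Stratifying on engagement recovers something close to the simulated effect. Without the engagement column, the adjustment cannot be computed at all.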