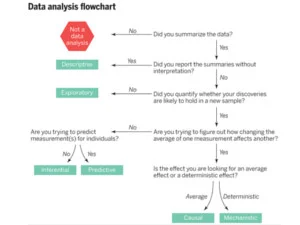

It’s Tough to Make Predictions, Especially about the Future ... but It’s Worth the Effort

In the course of planning a test to measure the impact of workshops based on The Conversation Project, my colleague asked why I wanted us to predict what would happen when we ran the test.

A good question!

In our case, the proposed test asks participants in an upcoming Conversation Project workshop a specific set of questions. We want to learn how to ask the questions efficiently and effectively.

Asking for an explicit prediction caused us to reflect on our current degree of belief and how much we know about how to ask the questions and analyze participant answers.

Early in exploring a system, we’re often uncertain, and our knowledge of the system under study may be relatively weak: “I really don’t know what will happen; just about any result would be possible.” That’s pretty much where we are right now in our measurement of The Conversation Project, but we’re committed to learning fast: we’ve got one test underway right now and two more in planning.

On the other hand, strong belief means something like, “I am confident I can tell you precisely what will happen: When we do x, we will see A.” If results match predictions over a series of tests, that’s a sign we know our system well.

Making a prediction helps both before and after we run the test.

Benefits before the Test

• Predictions imply measurement—an answer to the question, “how will we know if our prediction holds up?” Asking for a prediction is an indirect way to get us thinking about how to measure the impact of our test. Measures help make our tests meaningful and communicable.

• Predictions help us judge and refine our plan for the test. Do we have all the ingredients lined up to be able to assess if the test will increase our knowledge?

Benefits after the Test

• We can see if the test in fact increased our knowledge. I’ve observed a tendency in myself and others to say “of course I knew that” after an event, but our memories and beliefs are frequently affected by the most recent experience. To reduce self-deception, state a prediction before the test.

• Look at the increase in knowledge: compare what we knew before the test with what we know after it. Was it worth the effort to run the test, given what we learned? This reflection helps us get better at running tests efficiently.

If our predictions match the results really well in a series of tests, that’s our signal that we know what we’re talking about. We can use what we’ve learned to help others. However, before declaring “we know what works,” we should test the candidate changes under a range of conditions to deepen our knowledge. In our Conversation Project tests, what appears to work with ethnically homogeneous, middle-class, college-educated participants may fail to work with other audiences. So our advice about what to do will require nuance and multiple versions to perform well in a range of situations.
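For readers who like to see the bookkeeping, here is a minimal sketch of a prediction log, assuming we record each prediction before the test and the result after it. The `PredictionRecord` class, the `match_rate_by_audience` function, the audience labels, and the entries are all hypothetical and made up for illustration; they are not data from our workshops. It treats a prediction as a simple statement that either matches the result or doesn't, which is cruder than real tests usually are.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class PredictionRecord:
    """One test: the prediction is written down before the result is known."""
    audience: str    # hypothetical label for the kind of participants
    prediction: str  # what we expected to see, stated before the test
    result: str      # what we actually observed


def match_rate_by_audience(records):
    """Return, per audience, the fraction of tests where prediction == result."""
    matches = defaultdict(int)
    totals = defaultdict(int)
    for record in records:
        totals[record.audience] += 1
        if record.prediction == record.result:
            matches[record.audience] += 1
    return {audience: matches[audience] / totals[audience] for audience in totals}


# Made-up entries for illustration only.
log = [
    PredictionRecord("audience A", "most answer all questions", "most answer all questions"),
    PredictionRecord("audience A", "few skip question 3", "few skip question 3"),
    PredictionRecord("audience B", "most answer all questions", "many skip question 3"),
    PredictionRecord("audience B", "few skip question 3", "few skip question 3"),
]

for audience, rate in match_rate_by_audience(log).items():
    print(f"{audience}: predictions matched results in {rate:.0%} of tests")
```

Splitting the match rate by audience is one way to notice when predictions hold for one group of participants but not for another.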

Finally, there may be neuropsychological evidence that contrasting an explicit prediction with actual results (especially when the predictions don’t match the results) wakes up our brains chemically and primes us to learn. (C. D. Fiorillo, P. N. Tobler, W. Schultz, “Discrete Coding of Reward Probability and Uncertainty by Dopamine Neurons,” Science 299, 1898 (2003).)

So, while it’s tough to predict the future, it’s worth the effort.