Evidence for Causing an Effect

I work with a care team that aims to improve the health of a targeted group of patients with diabetes. The primary outcome effect is the level of hemoglobin A1c, summarized as the percentage of patients with A1c ‘in control’ (e.g., A1c < 8). An intermediate outcome effect is the percentage of patients with a current measure of A1c, defined as a lab value within the last 12 months in the electronic medical record.

An important change in the delivery of care is the strength of the relationship between the care team and the patient. A measure of the relationship is the number of ‘touches’ between the care team and the patient.

Preliminary tests with an increased number of touches have convinced the care team to persist and apply the change as a regular part of care.

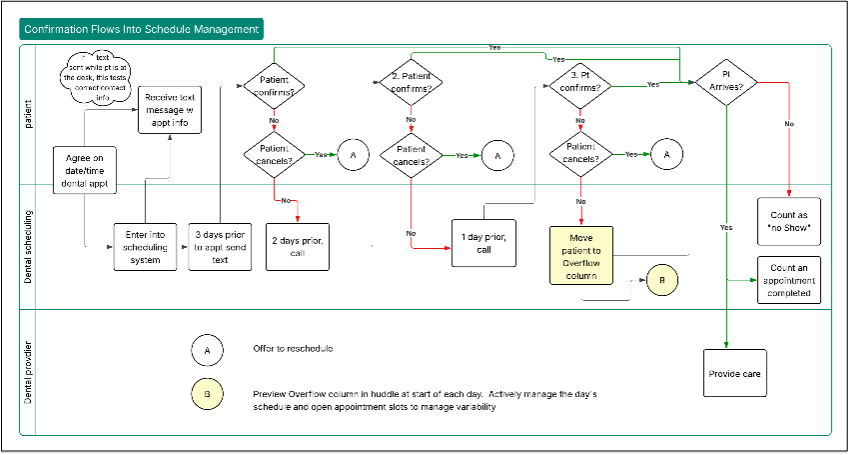

After preliminary testing, all patients in the target group at specific clinics are supposed to experience the high touch care. The improvement team and local managers need to monitor adherence to the new workflow and its impact on the outcomes. I discussed adherence in this post.

We typically construct a run chart or control chart to reveal whether the work system has shifted in the good direction; we annotate the chart with the date of the work system change.
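One common run chart signal is a "shift": a run of six or more consecutive points on the same side of the median. The sketch below is a minimal illustration of that rule, not the method behind the chart in this post. The weekly values are hypothetical, the function name `find_shifts` is my own, and the choice to use the overall median as the center line is an assumption (some teams use a baseline median instead).

```python
from statistics import median

def find_shifts(values, min_run=6):
    """Return (start_week, run_length, side) for every run of at least
    min_run consecutive points on one side of the overall median.
    Points exactly on the median are skipped: they neither extend nor
    break a run."""
    center = median(values)
    shifts = []
    run_side, run_start, run_len = None, None, 0
    for week, value in enumerate(values, start=1):
        if value == center:
            continue
        side = "above" if value > center else "below"
        if side == run_side:
            run_len += 1
        else:
            # The previous run has ended; record it if it was long enough.
            if run_len >= min_run:
                shifts.append((run_start, run_len, run_side))
            run_side, run_start, run_len = side, week, 1
    if run_len >= min_run:
        shifts.append((run_start, run_len, run_side))
    return shifts

# Hypothetical weekly percentages of patients with a current A1c.
weekly_pct = [41, 38, 44, 40, 39, 42, 71, 75, 78, 74, 76, 73]
change_week = 7  # annotation: first week of the new workflow

shifts = find_shifts(weekly_pct)
for start, length, side in shifts:
    note = " (matches the annotated change)" if start == change_week else ""
    print(f"Run of {length} points {side} the median starting week {start}{note}")
```

A run that starts at the annotated week tells us the data moved when the workflow changed. It does not, by itself, tell us why.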

Here’s an example of a run chart that shows an association between the system change and the measure of current A1c. The chart is based on real data, but the before-and-after difference is exaggerated to focus discussion on the fundamental points.

Comparing the median percentage of patients with current A1c before and after the change gives an estimated effect of about 34%. Does this chart show that the change starting at week 7 has caused the improvement in the percentage of patients with current A1c? The answer depends on our understanding of the causal system.
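The before-and-after comparison of medians is simple arithmetic. Here is a minimal sketch, assuming the weekly percentages sit in a plain list and the change starts in week 7. The numbers are hypothetical values chosen to give a difference of about the same size as the one discussed here; they are not the data behind the chart.

```python
from statistics import median

# Hypothetical weekly percentages of patients with a current A1c.
weekly_pct = [41, 38, 44, 40, 39, 42, 71, 75, 78, 74, 76, 73]
change_week = 7  # first week under the new workflow (weeks are 1-indexed)

def before_after_median_difference(values, change_week):
    """Median of the weeks from change_week onward minus the median of
    the weeks before it."""
    before = values[: change_week - 1]
    after = values[change_week - 1 :]
    return median(after) - median(before)

effect = before_after_median_difference(weekly_pct, change_week)
print(f"Difference in medians: {effect} percentage points")
```

The calculation yields a number whatever the causal structure is. Whether that number deserves to be called a causal effect is the question taken up next.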

Answering the Causal Question

Consider three causal directed acyclic graphs (DAGs) that may describe the diabetes care system.

To specify the DAGs, let these three nodes be binary: (1) Care Team decides to apply the high touch care method or not; (2) High touch care method is applied or not to an individual patient; (3) HbA1c measurement is current or not.

The unmeasured ‘Patient Nature’ node represents a collection of variables that include receptivity to the care team’s outreach and physiology/epigenetics that affect level of HbA1c.

Finally, the diagrams have a cause labeled “Week 7”, which is a marker for unmeasured factors that may vary at the same time as the change in care. The time-stamp is a label, not likely to be a cause itself in any circumstances I could think of. Analysts use time-stamps regularly in analysis of run charts or control charts to match unusual patterns in the data series with system events. So, I’ll use the Week 7 time-stamp here in the same spirit.

If the causal system is represented by Case A or Case B, then we cannot estimate a causal effect directly by the difference in medians before and after the change. If the system is described by Case C, then the difference in medians estimates the causal effect.

In Case A, Patient Nature affects whether the patient experiences Hi touch care, shown by the arrow between Patient Nature and Hi touch care. For example, patients who have a better experience with the care team may be more likely to respond to outreach and in turn get more touches. In DAG terms, there is a ‘backdoor’ path from outcome through Patient Nature to the change in workflow.

In both Cases A and B, there is an arrow from Week 7 to Patient Nature. This means that we allow for the possibility that some unmeasured factors have changed starting in Week 7 that have affected the patients receiving care. The patients after the change may differ in important ways from the patients treated before the change. For example, a strong seasonal pattern in the health of patients treated by the clinic that happens to align with the adoption of Hi touch care would confound the comparison and undermine a claim of causal effect.

Again, a backdoor path between the effect Current A1c and the change Hi touch care means there is confounding. The DAG makes this confounding easy to see.

In Case C, the DAG shows no backdoor paths from the effect to the proposed change. This DAG asserts that patients seen before Week 7 are just like patients seen starting in Week 7 on all factors that might affect whether they experience Hi touch care. In causal inference language, patients are ‘exchangeable’ before and after the change.

The improvement team must argue for Case C in asserting a causal effect; the run chart itself is compatible with all three cases.
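The backdoor reasoning can be checked mechanically. The sketch below encodes three small DAGs as edge lists and searches for open backdoor paths from Hi touch care to Current A1c when nothing is adjusted for. The edge lists are an assumption reconstructed from the prose, not a transcription of the diagrams. In particular, the arrow from Week 7 to the Care Team decision is assumed, and the function name `open_backdoor_paths` is hypothetical.

```python
def open_backdoor_paths(edges, treatment, outcome):
    """Return simple paths from treatment to outcome that begin with an
    arrow pointing INTO the treatment and contain no collider, so they
    are open when no variable is adjusted for."""
    edge_set = set(edges)
    neighbors = {}
    for parent, child in edges:
        neighbors.setdefault(parent, set()).add(child)
        neighbors.setdefault(child, set()).add(parent)

    def is_collider(prev, node, nxt):
        # A collider has arrows arriving from both sides: prev -> node <- nxt.
        return (prev, node) in edge_set and (nxt, node) in edge_set

    found = []

    def walk(path):
        node = path[-1]
        if node == outcome:
            found.append(path)
            return
        for nxt in sorted(neighbors.get(node, ())):
            if nxt in path:
                continue
            if len(path) == 1:
                # The first step must leave through an arrow into the treatment.
                if (nxt, node) not in edge_set:
                    continue
            elif is_collider(path[-2], node, nxt):
                continue  # a collider blocks the path
            walk(path + [nxt])

    walk([treatment])
    return found

# Assumed edges (parent, child) shared by all three cases.
BASE = [
    ("Week 7", "Care Team decision"),
    ("Care Team decision", "Hi touch care"),
    ("Hi touch care", "Current A1c"),
    ("Patient Nature", "Current A1c"),
]
CASES = {
    "A": BASE + [("Week 7", "Patient Nature"), ("Patient Nature", "Hi touch care")],
    "B": BASE + [("Week 7", "Patient Nature")],
    "C": BASE,
}

results = {
    name: open_backdoor_paths(edges, "Hi touch care", "Current A1c")
    for name, edges in CASES.items()
}
for name, paths in results.items():
    print(f"Case {name}: {len(paths)} open backdoor path(s)")
    for path in paths:
        print("   " + " - ".join(path))
```

Under these assumed edges, Cases A and B each have at least one open backdoor path and Case C has none, which matches the argument above. The code only reports what the assumed graph implies; it cannot tell us which graph describes the clinic.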

Management Control and Causal Effects

Management actions can reduce or eliminate some confounding. Designing care to apply the high touch approach to all patients seen by the care team removes or at least weakens the arrow between Patient Nature and Hi touch care. If the Hi touch care change gets adopted by multiple care teams, standardizing work to reduce team-to-team variation will attenuate possible confounding (backdoor paths) involving a care team factor.

Confounding of Patient Nature with the time of the change at Week 7 is more of a challenge. While people close to the work may have insight into whether the patients seen after the change are different in important ways from patients seen before the change, continued monitoring of performance will be necessary to discount the likelihood of time-associated confounding. On the other hand, if the change is adopted by multiple care teams starting at different times, with similar effects, then there is more evidence that the timing of the change is not what drives the improvement.
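The staggered-adoption check can be sketched as a per-team comparison, with each team's series split at its own start week. The team names, start weeks, and weekly values below are all hypothetical and serve only to show the shape of the calculation.

```python
from statistics import median

# Hypothetical data: each team adopts the change in a different week.
teams = {
    "Team 1": {"start_week": 7,
               "weekly_pct": [41, 38, 44, 40, 39, 42, 71, 75, 78, 74, 76, 73]},
    "Team 2": {"start_week": 5,
               "weekly_pct": [45, 43, 46, 44, 77, 80, 79, 78, 81, 80]},
    "Team 3": {"start_week": 9,
               "weekly_pct": [50, 52, 49, 51, 50, 53, 51, 52, 83, 85, 84, 86]},
}

def effect_by_team(teams):
    """Difference in medians (after minus before) for each team, split
    at that team's own start week (weeks are 1-indexed)."""
    effects = {}
    for name, info in teams.items():
        split = info["start_week"] - 1
        before = info["weekly_pct"][:split]
        after = info["weekly_pct"][split:]
        effects[name] = median(after) - median(before)
    return effects

effects = effect_by_team(teams)
spread = max(effects.values()) - min(effects.values())
for name, value in effects.items():
    print(f"{name}: {value} percentage points")
print(f"Spread across teams: {spread} percentage points")
```

Similar differences at different calendar times make it harder to blame a single time-linked factor, such as a seasonal pattern, for the shift. They do not rule out confounders that travel with the change itself.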

Summary: Causal Inference and Improvement

Care teams and their advisers want to know whether a change causes improvement so they can sustain and spread effective practices.

When we use a run chart or control chart to monitor explicit changes aimed at improvement, we look for a signal of special cause that corresponds to the time-stamp of the change in workflow. We need to make a causal argument to determine whether that special cause signal reflects a causal effect of the change. The charted data by themselves do not suffice to justify a causal interpretation.

A causal DAG is a practical way to summarize a causal argument, and it is relatively easy for care teams and their advisers to communicate and understand.

If a causal DAG justifies a causal interpretation of the signal from the control chart, then the signal of an assignable or special cause in the Shewhart/Deming sense aligns with an estimate of causal effect as defined in modern causal inference.