When Purple Becomes Blue: Perception Changes With Prevalence

David LeVari and co-authors summarize a series of experiments in last week’s Science that reveal another property of how humans appear to think.

When things change, even for the better, our assessments may drift, so that we see more problems than are really present.

To demonstrate this phenomenon, the psychologists started with a basic perception challenge.

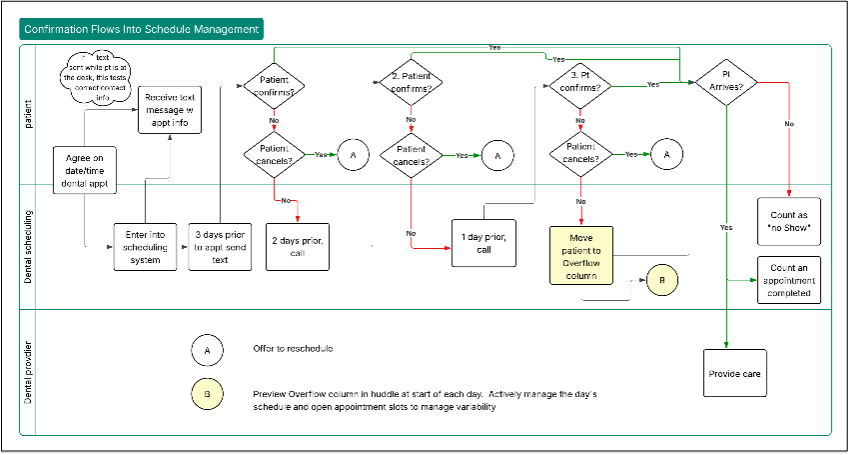

Can a person distinguish a blue dot from a purple dot? The psychologists asked each tester to label a dot presented on a computer screen as “blue” or “not blue”. Dots were randomly drawn from a specific “blue” spectrum or a specific “purple” spectrum. After some practice, each tester assessed 1000 dots.

In the control group of testers, the chance of getting a blue dot stayed the same, 50%, on every one of the 1000 trials. The percentage of dots that control testers identified as blue was essentially the same in the first 200 trials as in the last 200 trials.

In the experimental group of testers, the researchers reduced the proportion of blue dots in stages after the first 200 trials; for trials 351 to 1000, the chance of getting a blue dot was 6%. Compared with the first 200 trials, the final 200 trials for the experimental testers showed an obvious shift in judgment. Dots from the purple spectrum that were counted as “not blue” in the first 200 trials were more likely to be counted as blue in the last 200 trials.

“In other words, when the prevalence of blue dots decreased, participants’ concept of blue expanded to include dots that it had previously excluded.”
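To make the two designs concrete, here is a toy simulation in Python. It is not the authors’ model or data, and its assumptions are loud ones: dot colors sit on a hypothetical 0 (most purple) to 99 (most blue) continuum, the stepped prevalence values for trials 201 to 350 are illustrative guesses, and the simulated tester calls a dot “blue” whenever it is bluer than the average of the last 50 dots it saw, a crude stand-in for judging by comparison. The function names are hypothetical.

```python
import random
from collections import deque

# HYPOTHETICAL color continuum: 0 = most purple, 99 = most blue.
# Hues 0-49 stand in for the "purple spectrum", 50-99 for the "blue spectrum".
PURPLE = range(0, 50)
BLUE = range(50, 100)


def blue_prevalence(trial, decreasing):
    """Chance that a given trial (1-based) shows a blue-spectrum dot."""
    if not decreasing or trial <= 200:
        return 0.50
    # ASSUMPTION: these intermediate steps for trials 201-350 are illustrative.
    if trial <= 250:
        return 0.40
    if trial <= 300:
        return 0.28
    if trial <= 350:
        return 0.16
    return 0.06  # trials 351-1000, as described in the post


def run_tester(decreasing, n_trials=1000, window=50, seed=0):
    """Toy tester who calls a dot 'blue' if it is bluer than the recent average.

    Returns a list of (trial, hue, said_blue) tuples.
    """
    rng = random.Random(seed)
    recent = deque(maxlen=window)  # hues of the most recently seen dots
    results = []
    for trial in range(1, n_trials + 1):
        p_blue = blue_prevalence(trial, decreasing)
        spectrum = BLUE if rng.random() < p_blue else PURPLE
        hue = rng.choice(spectrum)
        # Relative judgment: the threshold is the mean of recently seen hues,
        # starting at the midpoint of the continuum before any dot is seen.
        threshold = sum(recent) / len(recent) if recent else 49.5
        results.append((trial, hue, hue > threshold))
        recent.append(hue)
    return results


def purple_called_blue(results, first, last):
    """Share of purple-spectrum dots labeled 'blue' within trials first..last."""
    labels = [said for t, hue, said in results
              if first <= t <= last and hue in PURPLE]
    return sum(labels) / len(labels)


for name, decreasing in [("control", False), ("experimental", True)]:
    res = run_tester(decreasing)
    early = purple_called_blue(res, 1, 200)
    late = purple_called_blue(res, 801, 1000)
    print(f"{name:>12}: purple called blue {early:.0%} (trials 1-200), "
          f"{late:.0%} (trials 801-1000)")
```

Under these assumptions the control tester’s rate of calling purple dots blue stays roughly flat, while the experimental tester’s rate climbs once blue dots become rare, because the threshold follows the falling average hue. Real testers are surely not this simple; the sketch only shows that a purely relative criterion is enough to produce this kind of drift.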

The psychologists extended the initial color experiment in many interesting ways.

First, they ran more dot experiments. In these, they told testers that the likelihood of a blue dot would decrease, offered monetary rewards for better performance, and made the decrease in the probability of a blue dot abrupt rather than gradual. They even reversed the challenge, increasing the proportion of blue dots.

They then replaced the dot task with judgments of whether faces looked aggressive and whether research proposals were ethical.

The drift phenomenon persisted across these extensions: “Across seven studies, prevalence-induced concept change occurred when it should not have.”

The psychologists conclude: “…our studies suggest that even well-meaning agents may sometimes fail to recognize the success of their own efforts, simply because they view each new instance in the decreasingly problematic context that they themselves have brought about. Although modern societies have made extraordinary progress in solving a wide range of social problems, from poverty and illiteracy to violence and infant mortality, the majority of people believe that the world is getting worse. The fact that concepts grow larger when their instances grow smaller may be one source of that pessimism.”

Link to Operational Definitions

The perception creep problem arises because it’s hard to hold a fixed concept—whether the concept is blue color or aggressive appearance or ethical research.

“…research suggests that the brain computes the value of most stimuli by comparing them to other relevant stimuli; thus, holding concepts constant may be an evolutionarily recent requirement that the brain’s standard computational mechanisms are ill equipped to meet.”

As discussed in last week’s post, an operational definition enables the translation of a concept into practice and gives us a way to help our brains do a better job of assessing and judging dots, faces and research proposals.

“An operational definition is one that people can do business with. An operational definition of safe, round, reliable, or any other quality must be communicable, with the same meaning to vendor as to purchaser, same meaning yesterday and today to the production worker.” (W.E. Deming, Out of the Crisis, MIT Center for Advanced Engineering Studies, Cambridge, MA, 1986, p. 272).

If we want to communicate “blue”, what could we do? At minimum, we could keep an exemplar blue dot on the computer screen so the tester can compare the trial dot to a single standard dot.

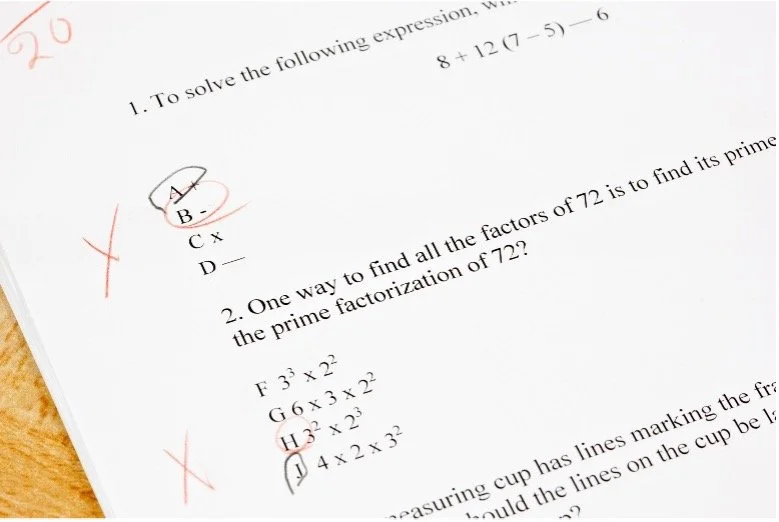

Even better, we can provide testers with a scale of purple to blue. The six dots here are from figure S1 in the supplementary materials to the Science article; I added the division line and the labels.

Now the task “determine if the trial dot is blue” uses the scale as the criterion. The test requires the subject to compare the trial dot to the scale, taking advantage of our natural skill at comparing items to detect differences. If the color appears to be in the purple half of the scale, declare the dot “not blue”; otherwise, declare the dot “blue.”
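A fixed scale turns the judgment into a comparison that ignores recent history. The sketch below assumes hypothetical hue values for the six reference dots on a 0 (purple) to 99 (blue) continuum, with the division line between the third and fourth dots; the actual figure S1 colors are not given as numbers here. Each trial dot gets the label of the reference dot it most resembles. `ReferenceDot`, `SCALE` and `classify` are illustrative names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReferenceDot:
    hue: float      # HYPOTHETICAL position on a 0 (purple) to 99 (blue) continuum
    is_blue: bool   # which side of the division line the dot sits on


# HYPOTHETICAL hue values standing in for the six dots of figure S1,
# ordered from purple to blue; the division line falls after the third dot.
SCALE = [
    ReferenceDot(10, False), ReferenceDot(25, False), ReferenceDot(40, False),
    ReferenceDot(60, True), ReferenceDot(75, True), ReferenceDot(90, True),
]


def classify(trial_hue, scale=SCALE):
    """Label a trial dot by the reference dot it most resembles.

    The scale is fixed, so the answer depends only on the trial dot,
    not on how many blue dots the tester has seen lately. On an exact tie,
    min() keeps the first match, i.e. the purpler reference dot.
    """
    nearest = min(scale, key=lambda ref: abs(ref.hue - trial_hue))
    return "blue" if nearest.is_blue else "not blue"


for hue in (35, 48, 52, 80):
    print(hue, classify(hue))
```

Because `classify` keeps no memory of earlier trials, a dot at a given hue gets the same label whether blue dots are common or rare, which is exactly the property the drifting testers lacked.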

I predict that the prevalence-induced concept change would attenuate, if not disappear completely, for testers given this operational definition of “blue.”

Beyond Blue: What can we do?

I admit that the other concepts in the paper, “aggressive appearance” and “ethical research,” are harder to capture in operational definitions than “blue.”

What can we do for those concepts? The background for the psychologists’ trials appears to have the right ingredients for crafting operational definitions. Start by building a set of example items that illustrate the concept and its negation. Order the examples into a scale and mark the scale to show which items fit the concept and which do not, analogous to the color dot scale. Then use the scale as a reference to decide whether new items match the concept.
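For faces or research proposals there is no hue number to measure, but an ordered scale of examples can still be used through comparisons alone. The sketch below assumes the exemplars are already ordered from least to most of the concept, that a `cut` index marks the first exemplar that fits, and that a rater can answer “does this item show more of the concept than that exemplar?” Binary search then places a new item with a handful of such comparisons; as a boundary convention (an assumption, not from the source), an item fits if it exceeds every exemplar below the cut. In the demo, a hidden numeric score is a hypothetical stand-in for a human rater, and all names are illustrative.

```python
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


def place_on_scale(item: T, exemplars: Sequence[T],
                   more_than: Callable[[T, T], bool]) -> int:
    """Return how many exemplars the item exceeds, using only pairwise comparisons.

    exemplars must be ordered from least to most of the concept.
    more_than(a, b) answers "does a show more of the concept than b?"
    Binary search needs about log2(len(exemplars)) comparisons.
    """
    lo, hi = 0, len(exemplars)
    while lo < hi:
        mid = (lo + hi) // 2
        if more_than(item, exemplars[mid]):
            lo = mid + 1   # item lies above exemplar mid
        else:
            hi = mid       # item lies at or below exemplar mid
    return lo


def matches_concept(item: T, exemplars: Sequence[T], cut: int,
                    more_than: Callable[[T, T], bool]) -> bool:
    """True if the item exceeds every exemplar marked as not fitting the concept."""
    return place_on_scale(item, exemplars, more_than) >= cut


# Demo: HYPOTHETICAL faces with hidden scores standing in for a rater's judgments.
exemplar_faces = [("face A", 1), ("face B", 3), ("face C", 4),
                  ("face D", 6), ("face E", 8), ("face F", 9)]
CUT = 3  # faces D to F were marked "aggressive" when the scale was built


def rater(a, b):
    return a[1] > b[1]


for new_face in [("new face 1", 7), ("new face 2", 2)]:
    print(new_face[0], matches_concept(new_face, exemplar_faces, CUT, rater))
```

The design choice mirrors the dot scale: the rater never has to hold an absolute notion of “aggressive” or “ethical” in mind, only compare the new item against fixed, previously agreed examples.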

To check for perception drift, challenge testers with instances they judged previously, and track whether the agreement between current and previous judgments is stable in a control chart sense. Instances near the middle of the scale will give a more sensitive check for drift, since borderline items are the ones most likely to be reclassified.
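One way to run this check is a p-chart, a standard attribute control chart, on the share of re-presented instances that receive the same label as before. The sketch below assumes each session re-presents a batch of previously judged instances, computes the center line from an initial baseline of sessions, and flags any session whose agreement rate falls outside three-sigma limits. The function name `p_chart` and the session counts in the demo are made-up illustration, not data from the paper.

```python
import math


def p_chart(agreements, sample_sizes, baseline=None, sigma=3.0):
    """p-chart for the share of re-judged instances that match the earlier label.

    agreements[i]   -- instances in session i judged the same as before
    sample_sizes[i] -- instances re-presented in session i
    baseline        -- number of initial sessions used for the center line
                       (defaults to all sessions)
    Returns (center_line, [(p_i, lcl_i, ucl_i, flagged_i), ...]).
    """
    if len(agreements) != len(sample_sizes):
        raise ValueError("need one sample size per session")
    k = len(agreements) if baseline is None else baseline
    p_bar = sum(agreements[:k]) / sum(sample_sizes[:k])
    rows = []
    for a, n in zip(agreements, sample_sizes):
        p = a / n
        # Binomial standard error for a sample of n, scaled to sigma limits.
        half_width = sigma * math.sqrt(p_bar * (1 - p_bar) / n)
        lcl = max(0.0, p_bar - half_width)
        ucl = min(1.0, p_bar + half_width)
        rows.append((p, lcl, ucl, p < lcl or p > ucl))
    return p_bar, rows


# HYPOTHETICAL sessions, each re-presenting 40 borderline instances.
agreements = [36, 35, 37, 36, 34, 36, 25]
sizes = [40] * 7
center, rows = p_chart(agreements, sizes, baseline=6)
print(f"center line {center:.3f}")
for session, (p, lcl, ucl, flagged) in enumerate(rows, start=1):
    note = "  <- check for drift" if flagged else ""
    print(f"session {session}: agreement {p:.2f}, limits [{lcl:.2f}, {ucl:.2f}]{note}")
```

Computing the center line from a baseline period keeps a drifting session from pulling the limits toward itself; a signal says only that agreement has changed, and the tester and scale still need to be examined to find out why.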